On Monday, the Finnish Ministry of Education and Culture held a public hearing on the implementation of Article 17 of the Copyright Directive. As part of this meeting, the Ministry outlined its proposal for a user rights-preserving “blocking procedure” that substantially deviates from all other implementation proposals that we have seen so far.

The procedure is a radical departure from the approach underpinning other user rights-preserving implementation proposals (such as the Austrian and German proposals) and the Commission’s proposed (and much delayed) Article 17 implementation guidance. Instead of limiting the use of automated filters to a subset of uploads where there is a high likelihood that the use is infringing, the Finnish proposal does away with automated blocking of user uploads entirely, though not with automated detection of potential infringements.

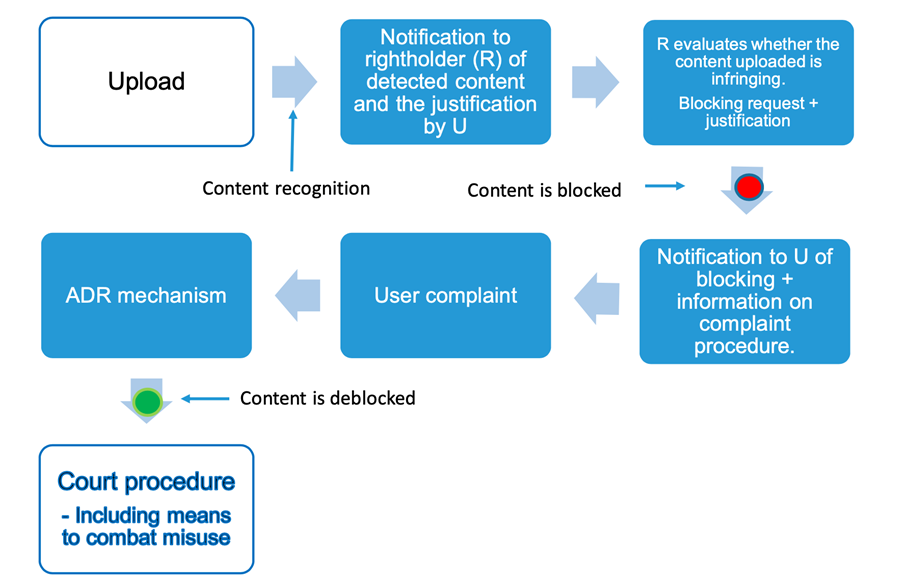

The Finnish proposal relies on the mandatory use of content recognition technology by platforms and on the rapid notification of rightsholders when uploads match works for which they have provided reference information. However, platforms are only required to disable access to uploaded content after rightsholders have sent them a properly justified request to block a particular upload.

While this approach bans automated filtering of user uploads, it still relies heavily on automated content recognition technology. The proposed “blocking procedure” requires all platforms covered by Article 17 to have technology in place that can match uploads to reference information provided by rightsholders, so that rightsholders can be directly notified when matching content is uploaded. Notifications sent to rightsholders also include the justifications that uploaders have provided at the time of upload as to why they consider a use of third-party content to be legitimate.

Based on these notifications, rightsholders have to evaluate whether the upload infringes copyright, taking “due note of the reasoning provided by the uploader”. If rightsholders come to the conclusion that an upload is infringing, they can issue a blocking request to the platform, which then has to disable access to the upload (otherwise it will be directly liable for copyright infringement). The platform also needs to notify the uploader and provide them with a copy of the blocking request. The uploader can then challenge the blocking of the upload through an independent Alternative Dispute Resolution (ADR) body. Outcomes of the ADR process are binding on the platform but can be challenged in court by either uploaders or rightsholders (see slides 14-26 of this presentation for a full overview of the process).
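For readers who think in terms of workflows, the sequence described above can be sketched as a small state machine. This is only an illustration of the order of steps as summarised in this post, not anything the Ministry has specified: all names (`Upload`, `Status`, `notify_rightsholder`, `request_block`, `adr_decision`) are hypothetical, and the court stage is left out.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import Optional


class Status(Enum):
    ONLINE = auto()    # upload is accessible
    BLOCKED = auto()   # access disabled after a justified blocking request
    RESTORED = auto()  # ADR body decided in favour of the uploader


@dataclass
class Upload:
    uploader: str
    matched_work: Optional[str]        # None if content recognition found no match
    uploader_justification: str = ""   # reasoning given at the time of upload
    status: Status = Status.ONLINE
    log: list = field(default_factory=list)


def notify_rightsholder(upload: Upload) -> Optional[dict]:
    """A match triggers a notification to the rightsholder, never a block."""
    if upload.matched_work is None:
        return None
    upload.log.append("rightsholder notified")
    return {
        "work": upload.matched_work,
        "justification": upload.uploader_justification,
    }


def request_block(upload: Upload, reason: str) -> None:
    """The platform disables access only on a justified request."""
    if not reason.strip():
        raise ValueError("a blocking request must be duly justified")
    upload.status = Status.BLOCKED
    upload.log.append(f"blocked: {reason}")
    upload.log.append("uploader notified with a copy of the request")


def adr_decision(upload: Upload, in_favour_of_uploader: bool) -> None:
    """The ADR outcome is binding on the platform."""
    if upload.status is not Status.BLOCKED:
        raise ValueError("only blocked uploads can be challenged")
    if in_favour_of_uploader:
        upload.status = Status.RESTORED
    upload.log.append("ADR decision applied")
```

The point the sketch makes is structural: a content match on its own never changes the status of an upload. Only a justified request from a rightsholder or a decision of the ADR body does.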

A particular balance

The Finnish proposal strikes a particular balance. While banning fully automated content blocking constitutes a very strong fundamental rights-preserving move, the proposal also makes rightsholders – who have an interest in any dispute over the use of their protected works in user uploads – the initial arbiter of such disputes. As explained during the hearing, the Ministry of Education and Culture settled on this approach in order to comply with the requirement in Article 17(9) that:

“Where rightsholders request to have access to their specific works or other subject matter disabled or to have those works or other subject matter removed, they shall duly justify the reasons for their requests.”

So far, Member States (and the Commission itself) have interpreted this provision as applying only in the context of the complaint and redress mechanism established by Article 17(9). User rights advocates have repeatedly highlighted that this contextual limitation is not supported by the text of the Article, and that the provision must therefore be interpreted to mandate that all blocking and removal requests (including the initial ones) be duly justified by the requesting rightsholders. Under this literal reading of the obligation, which Finland now supports, fully automated takedowns become impossible to reconcile with the text of the directive.

Unfortunately, banning fully automated blocking does not solve all user rights concerns. Tasking rightsholders with assessing whether a particular use of their works is infringing gives them a lot of power over users’ speech, and that power is ripe for abuse. In recognition of the power imbalance between users and rightsholders, the Ministry has included a number of ex-post checks on rightsholders’ power: users have access to an impartial ADR mechanism, rightsholders can be held liable for damages or harm (not only economic) caused by wrongful blocking, and courts can bar rightsholders from using the blocking functionality if they repeatedly make wrongful blocking requests.

The Ministry made it clear that it sees the ADR mechanism as a central element of its proposal and has clearly given this element a lot of thought: the body would be independent of both platforms and rightsholders, it would publish its decisions, which would be binding on platforms, and it would have the power to recommend compensation for harm and/or damages caused to the user.

While this proposal sets a new benchmark for the out-of-court complaint and redress mechanism required by the directive, its usefulness will largely depend on the ease with which users will be able to bring disputes in front of this body. For the ADR mechanism to offer meaningful protection to users from rightsholder overreach, it needs to be easy to use, yet the proposal requires users to appeal to the ADR body via a relatively complex process (see slide 30 from the presentation). While it is impossible to predict at this stage how uploaders will react to removals that they consider unjustified, it seems entirely plausible that instead of engaging with a complex system they will simply give up.

A clear win for large platforms?

So what would the proposed “blocking procedure” mean for the different stakeholders? It seems clear that the main beneficiaries will be large platforms: the proposed mechanism would push the responsibility for determining the legitimacy of the use of third-party content to users and rightsholders. The role of platforms is reduced to implementing blocking decisions taken by rightsholders and the decisions of the ADR body. As long as they act in line with these decisions, their liability risk is reduced to zero.

This relatively privileged position of platforms comes at the cost of being required to implement automated content recognition technology that can do the initial content matching. Requiring platforms to have such technology in place may not be a problem for larger platforms, but it will likely represent a much bigger challenge (in terms of costs) for smaller platforms, especially if they have to deal with multiple types of content at once.

For rightsholders and users, the picture is less clear. The Finnish model allocates a lot of power to rightsholders, who have the right to decide whether uploads containing their works must be taken down or not. On the flip side, having to justify every single blocking request will require a lot of resources (without resulting in a corresponding increase in revenues).

For users, having rightsholders assess whether their uploads are legitimate – instead of being subject to automated decisions – is not necessarily an improvement. Rightsholders are not neutral arbiters when deciding whether their own rights have been infringed, and are therefore unlikely to invest resources to ensure that user rights are respected. While users, and potentially also the organizations that represent them, would have the possibility of challenging wrongful blocking in court, they will not be able to force rightsholders to change their decision parameters, even if these lead to systematic overblocking. The only remedies available to discourage systematic overblocking are claims for damages to be paid by rightsholders for the harm caused by individual cases of wrongful blocking, and (at the very end of the line) the possibility for courts to exclude rightsholders from issuing blocking requests in case of repeated wrongful claims.

Furthermore, the proposal requires substantial effort from uploaders who want to exercise their right to use third-party content under exceptions and limitations, or who want to make sure that other types of legitimate uses are not wrongfully blocked. The model is based on the idea that users justify their use of third-party content at upload. While this may make sense when sharing more elaborate creations, it seems unrealistic and undesirable to expect users to include justifications with every upload in the more casual sharing contexts common on many social media platforms. Even if users make the effort of providing justifications for their use of third-party content, they have no guarantee that rightsholders will actually take those justifications into account when issuing blocking requests. In addition, the amount of information that users need to provide for “deblocking requests” will likely discourage a substantial portion of uploaders from trying to contest unjustified blocking by rightsholders.

For both rightsholders and users, this means that the proposed system will only work if the number of uploads that give rise to blocking requests is very limited. The number of blocking requests will be a function of the ability of platforms and rightsholders to conclude comprehensive licensing arrangements. Unless rightsholders are willing to comprehensively license platforms (even in sectors like the AV sector, where this goes against current business practices), they will have to process a substantial number of notifications if they want to effectively exercise their rights.

Despite these potential shortcomings, the proposal by the Ministry of Education and Culture is another interesting contribution to the implementation discussion. It clearly shows that governments are struggling to reconcile Article 17 with national systems of fundamental rights protection. The Finnish government is the third government to make it clear that simply transposing the language of Article 17 into national legislation is not a viable way to implement the directive in a fundamental rights-preserving manner. The approach presented on Monday may not be perfect yet, but it is another approach that shows a real effort on the part of a national legislator to protect users’ rights.

This post was first published on the COMMUNIA website.
