For anyone interested in the discussions about automated content filtering, Christmas came early this week: on Monday YouTube published the first edition of its Copyright Transparency Report. The report, which covers copyright enforcement actions on the platform from January to June of this year, provides much-needed insights into how YouTube’s various copyright management systems function. In publishing this report YouTube is finally bringing some empirical evidence to the discussion about automated content filtering that is being fuelled by the ongoing implementation of Article 17 of the Copyright in the Digital Single Market (CDSM) Directive. In this context it is worth pointing out that the report covers a period before most national implementations of Article 17 took effect, which means that the numbers it presents reflect the status quo ante and can thus serve as a baseline for assessing the actual impact of Article 17 going forward.

Over-blocking is real

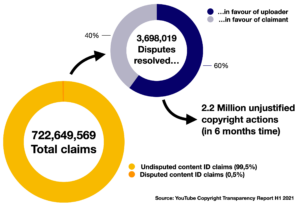

So what can we learn from this first copyright transparency report? The overall take-away is that automated content removal is a big numbers game. In total YouTube processed 729.3 million copyright actions in the first half of 2021, of which the vast majority (99%) were processed via Content ID (as opposed to other tools, such as the Copyright Match Tool and the Webform). And while YouTube claims that Content ID is much more accurate and less prone to abuse than its other systems, Content ID still received 3.7 million disputes from uploaders claiming that the actions taken against them (these can be blocks or removals, but also demonetisation actions) were unjustified. 60% of these disputes were ultimately decided in favour of the uploaders, which means that in the first half of 2021 Content ID generated at least 2.2 million unjustified copyright actions against its users on behalf of rightholders. [1] In other words, over-enforcement (both unjustified blocking and unjustified demonetisation) is a very real issue that affects the rights of a substantial number of uploaders on a regular basis.
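The arithmetic behind these figures can be checked with a short sketch. The inputs are the numbers quoted above from the report; the variable names are illustrative, and the disputed share of Content ID actions is a derived estimate that does not appear in the report itself. It is approximate because the 99% figure is rounded.

```python
# Figures quoted from the report for January to June 2021.
total_actions = 729_300_000   # all copyright actions processed
content_id_share = 0.99       # rounded share handled via Content ID
disputes = 3_700_000          # Content ID disputes filed by uploaders
upheld_share = 0.60           # disputes decided in the uploader's favour

# Actions that turned out to be unjustified once disputed.
unjustified_actions = round(disputes * upheld_share)

# Derived estimate (not in the report): share of Content ID actions disputed.
content_id_actions = total_actions * content_id_share
dispute_rate = disputes / content_id_actions

print(f"Unjustified actions: {unjustified_actions / 1e6:.1f} million")
print(f"Disputed share of Content ID actions: {dispute_rate:.1%}")
```

Read this way, only a small fraction of Content ID actions is ever disputed, yet that fraction still translates into millions of unjustified actions in absolute terms.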

This number alone makes it very clear that concerns about over-blocking by automated upload filters are very much grounded in reality, and it underlines the importance of strong ex-ante safeguards in national implementations of Article 17. It will be interesting to see how these numbers change once Article 17 has been widely implemented. For instance, how will they be affected once YouTube has adapted its automated filters to implement ex-ante safeguards such as the German rules on treating fragments shorter than 15 seconds as presumably legal? And, more crucially, what will be the impact of limiting permissible ex-ante filtering to “manifestly infringing” content, as partly endorsed by the Commission’s Guidance and proposed by Advocate General Saugmandsgaard Øe in Case C-401/19? To better understand the impact of such measures, it would be helpful if future editions of the transparency report broke out the numbers for the EU 27, unless YouTube implements the required changes globally.

A Brussels effect?

There are signs in the transparency report that YouTube is indeed making global changes to its copyright management system to comply with the provisions of the CDSM Directive. The report details how YouTube has implemented compliance with the obligations imposed on OCSSPs in Article 17(4)(b) and (c). Rather than giving all rightholders access to its most powerful rights management tool (access to Content ID remains limited to “movie studios, service providers and other publishers that have heavy reposting of copyrighted content”), YouTube has expanded access to the Copyright Match Tool, the use of which was previously limited to uploaders enrolled in the YouTube Partner Programme (YPP):

In October [2021], we upgraded the Copyright Match Tool to also find reuploads of videos removed through the webform, so that the tool isn’t only effective for and available to those willing to upload their video content onto YouTube. At the same time, we expanded access of this feature to any rightsholder who has submitted a valid copyright removal request through the webform, so that they will be shown subsequent reuploads of the videos they reported for removal. Since the expansion took place after the reporting period, the data in this report does not reflect these changes.

So, the Copyright Match Tool allows any rightholder to automatically detect uses of works for which they have provided reference files (in the form of videos uploaded to YouTube) and to find re-uploads of works that they have previously had removed manually via the Webform. From what is disclosed in the transparency report, it seems that rightholders who do not have access to Content ID will not be able to automatically block uploads containing new uses of their works once these have been detected by the Copyright Match Tool. Instead they need to manually “choose to archive the match and leave the video up, file a takedown request, or contact the uploader”. While the need for manual intervention by the rightholder before removal is very welcome from the perspective of protecting the rights of uploaders, this construction does raise the question of why YouTube should be able to give one class of rightholders (those with access to Content ID) the ability to automatically remove matches without human intervention, while requiring human intervention from all others.

In any case, it seems clear that the above-described changes to the Copyright Match Tool are directly inspired by the need to comply with Article 17 in EU Member States, and it is interesting to see that this seems to have inspired changes to YouTube’s global approach to copyright management. This is another example of a trend in which global platform regulation standards are effectively set by the first legislator willing to act (as long as the market controlled by that legislator is sufficiently big), something that has also been referred to as the Brussels effect in relation to the GDPR.

Matching at upload time

Another (global) change to YouTube’s copyright management system is that YouTube now claims to be able to match content in real time:

We offer a number of methods for uploaders to address Content ID claims they receive. As of March 2021, uploaders are notified through our Checks functionality during the video upload process whether there is material in their video that may result in a Content ID claim. Before they publish the video, they may edit out the content or use the dispute process described below. These options are also available after a video is published and claimed.

The ability to do real-time matching is an important technical capacity that has been implicitly assumed to exist by earlier proposals for a user rights-preserving implementation of Article 17 (here and here) and by subsequent national implementations of the directive. Without this ability it would be impossible to distinguish between manifestly infringing and not manifestly infringing uploads at the point of upload, a distinction on which these proposals and implementations, as well as the European Commission’s implementation guidance, hinge. The fact that Content ID only gained this capacity in March of this year should raise questions about how far it is proportionate to expect small and medium-sized platforms to implement technical measures to comply with Article 17.

All in all, YouTube’s copyright transparency report is a promising start. It finally provides some of the data that YouTube (and other platforms) should have provided for the purposes of the Article 17 stakeholder dialogue. Still, the maxim of better late than never clearly applies here, especially since this first edition, covering the last six months before the implementation deadline of the CDSM Directive, does provide some much-needed baseline data for assessing the impact of Article 17 as platforms start to bring their systems into compliance. For subsequent editions to be even more useful, it would be welcome if YouTube could break out information per country/territory and provide more detailed information on the types of matches that have triggered automated actions.

[1] The real number is likely to be much higher, since research into notice-and-takedown systems suggests that only a small fraction of users whose lawful uploads are removed make use of the complaint procedure available to them. Compare: Urban, Jennifer M. and Karaganis, Joe and Schofield, Brianna, Notice and Takedown in Everyday Practice (March 22, 2017). UC Berkeley Public Law Research Paper No. 2755628, Available at SSRN: https://ssrn.com/abstract=2755628 or http://dx.doi.org/10.2139/ssrn.2755628

CC BY 4.0
